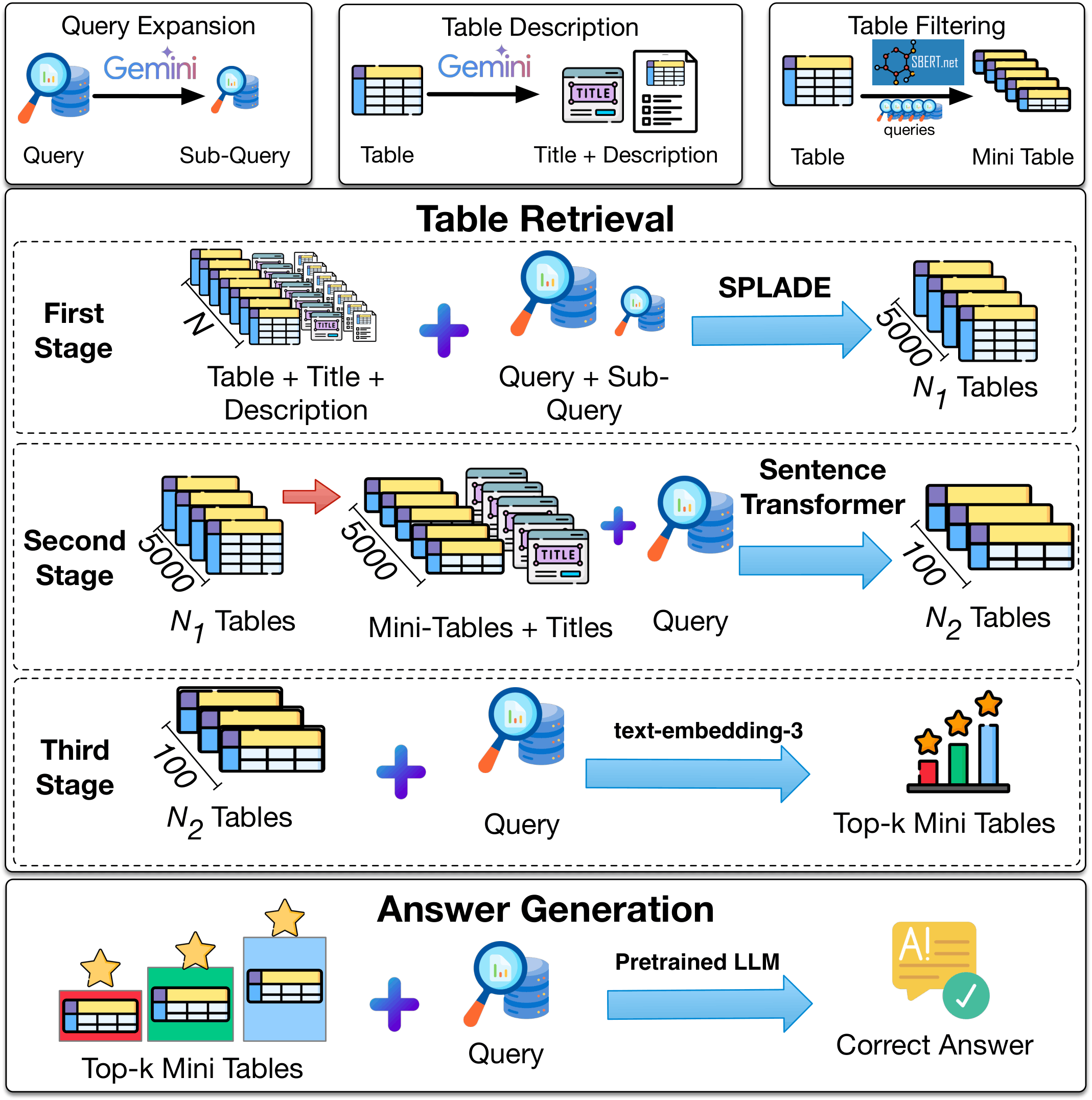

The CRAFT Pipeline

Enriching Queries & Tables

Query Expansion

Gemini 1.5 Flash generates sub-questions that decompose the original query, broadening its lexical coverage.
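Here is a minimal sketch of one way the sub-questions could be merged with the original query before retrieval. It assumes the LLM output has already been parsed into a list of strings. The function name `expand_query` and the plain concatenation strategy are hypothetical and not taken from CRAFT.

```python
from typing import List


def expand_query(query: str, sub_questions: List[str]) -> str:
    """Append LLM-generated sub-questions to the original query.

    Assumption: the sub-questions were already produced by the LLM and are
    passed in as plain strings; simple concatenation is a hypothetical choice.
    """
    original = query.strip()
    parts = [original]            # original query first, so its terms always survive
    seen = {original.lower()}
    for sq in sub_questions:
        sq = sq.strip()
        # Drop blanks and case-insensitive duplicates so repeated wording
        # does not inflate term weights in the sparse retriever.
        if sq and sq.lower() not in seen:
            parts.append(sq)
            seen.add(sq.lower())
    return " ".join(parts)


expanded = expand_query(
    "Which team won the most titles after 2000?",
    ["Which teams won titles after 2000?", "How many titles did each team win?", ""],
)
print(expanded)
```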

Table Enrichment

Each table receives an LLM-generated title and a natural-language summary, bridging the lexical gap between how users phrase questions and how raw table cells are written.
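The enriched representation can be sketched as plain text: title, then summary, then the serialized rows. This sketch rests on assumptions. The `Table` dataclass, the `col: value` row format, and `enriched_text` are all hypothetical, and the title and summary are assumed to have been generated by the LLM beforehand.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Table:
    header: List[str]
    rows: List[List[str]]
    title: str = ""        # LLM-generated title (assumed already produced)
    description: str = ""  # LLM-generated summary (assumed already produced)


def serialize_rows(table: Table) -> str:
    """Flatten rows into 'col: value' text, one row per line (hypothetical format)."""
    lines = []
    for row in table.rows:
        cells = [f"{h}: {v}" for h, v in zip(table.header, row)]
        lines.append(" | ".join(cells))
    return "\n".join(lines)


def enriched_text(table: Table) -> str:
    """Prepend the title and summary to the serialized content, skipping empty parts."""
    parts = [table.title, table.description, serialize_rows(table)]
    return "\n".join(p for p in parts if p)


t = Table(
    header=["Country", "Capital"],
    rows=[["France", "Paris"], ["Japan", "Tokyo"]],
    title="Capitals of countries",
    description="Lists each country with its capital city.",
)
print(enriched_text(t))
```

The same string can then be fed to whichever encoder indexes the table, so the title and summary contribute vocabulary that the raw cells lack.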

Mini-Table Construction

A Sentence Transformer selects the 5 rows of each table most relevant to the query, reducing context size by up to 5×.
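Row selection can be sketched as scoring each row against the query and keeping the top 5. To keep the example runnable without downloading a model, a bag-of-words cosine similarity stands in for the Sentence Transformer encoder. `embed`, `cosine`, and `mini_table` are hypothetical names. For example, a 25-row table trimmed to 5 rows keeps one fifth of its rows; the actual reduction depends on each table's length.

```python
import math
import re
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Stand-in for a Sentence Transformer encoder: a bag-of-words vector.

    Assumption: swapped in so the sketch runs without model downloads.
    """
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0


def mini_table(query: str, rows: List[str], k: int = 5) -> List[str]:
    """Keep the k rows most similar to the query, in their original order."""
    q = embed(query)
    # Stable sort: ties keep their original relative order.
    scored = sorted(range(len(rows)), key=lambda i: cosine(q, embed(rows[i])), reverse=True)
    keep = sorted(scored[:k])  # restore original row order for readability
    return [rows[i] for i in keep]


rows = ["apple pie", "france capital paris", "banana", "capital city"]
picked = mini_table("capital of france", rows, k=2)
print(picked)
```

Restoring the original row order is a design choice here: it keeps the mini-table readable as a table rather than as a ranked list.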

Figure 1: CRAFT three-stage cascaded retrieval pipeline with preprocessing and answer generation.

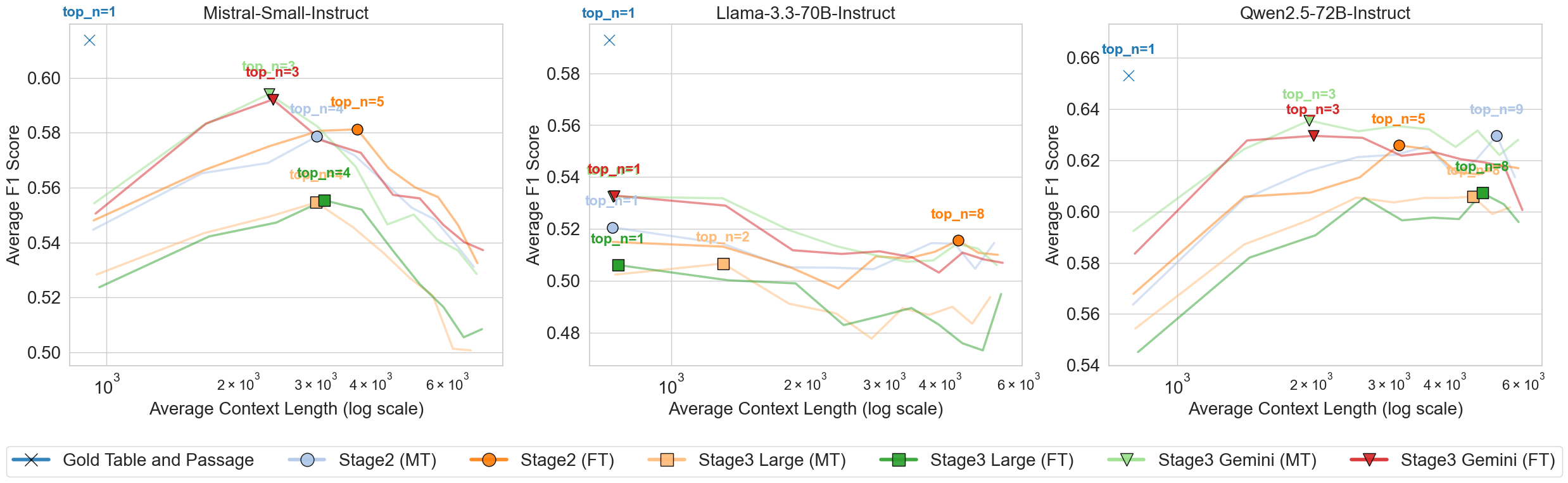

Progressively Precise Reranking

Sparse Retrieval

SPLADE indexes enriched table representations (table content + title + description) and retrieves the top 5,000 candidates from the full corpus via sparse lexical matching.
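SPLADE represents both queries and documents as sparse term-weight vectors and scores them by dot product, which an inverted index makes cheap to compute over a large corpus. The sketch below assumes those vectors are already available as plain `dict`s, so no real SPLADE model is loaded. `build_index` and `sparse_search` are hypothetical helpers.

```python
import heapq
from collections import defaultdict
from typing import Dict, List, Tuple

SparseVec = Dict[str, float]  # term -> weight, as a SPLADE encoder would output


def build_index(docs: Dict[str, SparseVec]) -> Dict[str, List[Tuple[str, float]]]:
    """Inverted index: term -> list of (doc_id, weight) for nonzero weights."""
    index: Dict[str, List[Tuple[str, float]]] = defaultdict(list)
    for doc_id, vec in docs.items():
        for term, w in vec.items():
            if w > 0:
                index[term].append((doc_id, w))
    return index


def sparse_search(
    index: Dict[str, List[Tuple[str, float]]], query: SparseVec, k: int = 5000
) -> List[Tuple[str, float]]:
    """Score by sparse dot product and return the top-k (doc_id, score) pairs."""
    scores: Dict[str, float] = defaultdict(float)
    for term, qw in query.items():
        # Only documents sharing a term with the query are ever touched.
        for doc_id, dw in index.get(term, ()):
            scores[doc_id] += qw * dw
    return heapq.nlargest(k, scores.items(), key=lambda kv: kv[1])


docs = {
    "t1": {"capital": 1.2, "france": 0.8},
    "t2": {"population": 1.0},
    "t3": {"capital": 0.5},
}
res = sparse_search(build_index(docs), {"capital": 1.0, "france": 1.0}, k=2)
print(res)
```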

Dense Reranking

A Sentence Transformer (all-mpnet-base-v2 or Jina v3) scores mini-tables against the expanded query, narrowing the 5,000 candidates down to the top 100.
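The dense stage only re-scores the survivors of sparse retrieval. Below is a minimal sketch, assuming query and mini-table embeddings have already been computed by the encoder and are given as plain float lists. `dense_rerank` is a hypothetical name, and cosine similarity is assumed as the scoring function.

```python
import math
from typing import Dict, List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0


def dense_rerank(
    query_vec: Sequence[float],
    candidate_vecs: Dict[str, Sequence[float]],
    candidates: List[str],
    keep: int = 100,
) -> List[str]:
    """Reorder sparse-stage candidates by cosine similarity and keep the top `keep`."""
    ranked = sorted(candidates, key=lambda c: cosine(query_vec, candidate_vecs[c]), reverse=True)
    return ranked[:keep]


vecs = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [0.7, 0.7]}
survivors = dense_rerank([1.0, 0.1], vecs, ["a", "b", "c"], keep=2)
print(survivors)
```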

Fine-Grained Reranking

OpenAI text-embedding-3 (small/large) or gemini-embedding-001 performs high-precision reranking of the 100 candidates to select the final top-k tables.
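Taken together, the three stages form a cascade in which each scorer sees only the previous stage's survivors, so the most expensive model handles 100 tables rather than the whole corpus. The sketch below is a generic, hypothetical `cascade` helper with toy scorers. In CRAFT's setting, the stage list would roughly be sparse (5,000), then dense (100), then fine-grained (k).

```python
from typing import Callable, List, Sequence, Tuple

Scorer = Callable[[str, str], float]  # (query, table_id) -> relevance score


def cascade(query: str, corpus: Sequence[str], stages: List[Tuple[Scorer, int]]) -> List[str]:
    """Run progressively more expensive scorers over progressively smaller pools.

    Each stage re-scores only the survivors of the previous stage and keeps
    its top `cutoff` items.
    """
    pool = list(corpus)
    for score, cutoff in stages:
        # sorted() calls the key once per item, so each stage scores len(pool) items.
        pool = sorted(pool, key=lambda t: score(query, t), reverse=True)[:cutoff]
    return pool


calls = {"cheap": 0, "costly": 0}


def cheap(q: str, t: str) -> float:
    calls["cheap"] += 1
    return int(t[1:])   # toy score: prefers higher ids


def costly(q: str, t: str) -> float:
    calls["costly"] += 1
    return -int(t[1:])  # toy score: prefers lower ids


corpus = [f"t{i}" for i in range(10)]
top = cascade("query", corpus, [(cheap, 5), (costly, 2)])
print(top, calls)
```

The call counts show the cost structure: the second scorer runs only on the 5 survivors of the first. With the cutoffs above, the fine-grained stage would score at most 100 candidates per query regardless of corpus size.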

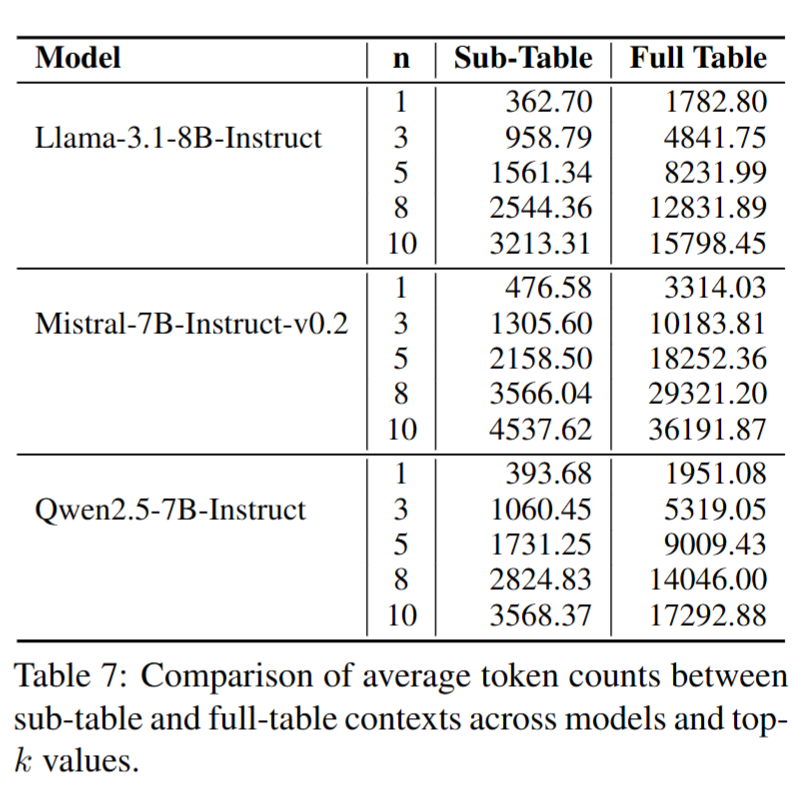

LLM Reading over Mini-Tables

The top-k mini-tables selected by Stage 3 (fine-grained reranking) are concatenated and passed to an LLM (Llama-3.1-8B, Qwen2.5-7B, Mistral-7B, GPT-4o, etc.) to generate the final answer. Using mini-tables instead of full tables reduces token consumption by up to 5× while preserving the most relevant evidence rows.
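The reading step amounts to building one prompt from the retrieved mini-tables. A minimal sketch follows. The prompt template, `build_reader_prompt`, and the whitespace-based `rough_tokens` counter are assumptions, and real LLM tokenizers count differently. With a toy 25-row table and its 5-row mini-table, the token ratio comes out to 5.

```python
from typing import List


def build_reader_prompt(question: str, mini_tables: List[str]) -> str:
    """Concatenate retrieved mini-tables into a single reader prompt (hypothetical template)."""
    blocks = [f"Table {i}:\n{t}" for i, t in enumerate(mini_tables, start=1)]
    context = "\n\n".join(blocks)
    return f"{context}\n\nQuestion: {question}\nAnswer:"


def rough_tokens(text: str) -> int:
    """Whitespace token count; a crude proxy for a real tokenizer."""
    return len(text.split())


full_table = "\n".join(f"row{i} a b c" for i in range(25))  # toy 25-row table
mini = "\n".join(f"row{i} a b c" for i in range(5))         # its 5-row mini-table
prompt = build_reader_prompt("Which row comes first?", [mini, mini])
print(rough_tokens(full_table) / rough_tokens(mini))
```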
